Rapid Current vs. Dead Calm — What You See When You Compare

My work involves market analysis. B2B SaaS, fintech, healthcare — the terrain changes every quarter. New entrants arrive, incumbents consolidate, and last year's correct answer becomes this year's reason for losing. Living in that world trains you to see markets that do not move.

When I looked at 20 years of psychedelic trance data, that eye responded.

Rapid current (B2B SaaS, fintech, healthcare):

- New entrants every quarter
- Player roster changes constantly
- Technology destroys consumption patterns
- Last year's top is this year's third

Dead calm (psychedelic trance):

- Top artists: nearly unchanged
- Chord progressions: Am–G–F–E, fixed
- BPM: converged at 138±5
- New disruptors: absent

This is not a niche. It is a vacant space that nobody has yet recognized as a battlefield.

The True Nature of "What Is This?"

It arrived first as fear. The reading: "a market that doesn't move has no demand — entering is pointless." Five seconds later, it inverted.

First reading: no movement → no demand → entry is meaningless

Inverted reading: top roster unchanged → no challengers have arrived → nobody has recognized it as a battlefield → the space belongs to whoever recognizes it first

The question "do I have talent" presupposes a comparison target. Stand at a coordinate where no comparison target exists, and the question becomes invalid. The dead calm (nagi) was not a warning. It was a vacancy notice.

I Discarded All My Own Thinking

I paused here.

"I found the dead calm — I will enter here." That judgment is my own thinking. And my own thinking has been wrong many times. 180 songs, none broke through. Two prototypes, neither finished.

So I decided to discard all of it and stop taking my own cognition as a premise. The goal was no longer "what I believe is correct" but "the point where multiple independent intelligences converge."

Building the Five-LLM Stream

OpenAI, Anthropic, Perplexity, Vertex (Gemini), Gemini Flash. Send the same question to all of them simultaneously, line up the responses, find the convergence point. I built that stream.
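The fan-out step described above can be sketched roughly as follows. This is a minimal illustration, not the actual stream: the providers are stand-in stubs, and the names `Provider`, `make_stub`, `fan_out`, and `PROVIDERS` are all hypothetical. A real version would replace each stub with a call to that vendor's own SDK.

```python
import asyncio
from typing import Awaitable, Callable, Dict

# Hypothetical provider type: takes a prompt, returns the model's text.
Provider = Callable[[str], Awaitable[str]]


def make_stub(name: str, delay: float) -> Provider:
    """Build a stand-in provider; a real one would call the vendor's SDK."""
    async def ask(prompt: str) -> str:
        await asyncio.sleep(delay)  # simulate network latency
        return f"[{name}] answer to: {prompt}"
    return ask


async def fan_out(prompt: str, providers: Dict[str, Provider]) -> Dict[str, str]:
    """Send one prompt to every provider concurrently; collect replies by name."""
    names = list(providers)
    replies = await asyncio.gather(
        *(providers[n](prompt) for n in names),
        return_exceptions=True,  # one failing provider should not sink the rest
    )
    return {
        n: (f"ERROR: {r}" if isinstance(r, Exception) else r)
        for n, r in zip(names, replies)
    }


# Assumed labels for the five endpoints named in the text.
PROVIDERS: Dict[str, Provider] = {
    name: make_stub(name, 0.01)
    for name in ["openai", "anthropic", "perplexity", "vertex-gemini", "gemini-flash"]
}

if __name__ == "__main__":
    results = asyncio.run(fan_out("Is psytrance a static market?", PROVIDERS))
    for name, text in results.items():
        print(f"{name:>14} | {text}")
```

Keeping the replies keyed by provider name makes it easy to line them up side by side, which is the part the comparison actually depends on.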

This is not about "choosing the best AI." Any single model starts saying incoherent things once context deepens. The problem is not individual model quality. It is taking an answer from a single perspective in the first place.

Alone: Incoherent. Together: Convergent.

Running it made certain things obvious.

Models do not pay the cost of "expressing uncertainty": they answer in the same confident tone whether or not they are right. Confidence and accuracy are separate things.

Agreement points indicate facts that exist in the shared training data of all models. These are reliable.

Do not reject the outlier. Ask why it differs — this is the origin of the veto right.
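One rough way to make "agreement points" and "outliers" concrete is to score how much each response overlaps with the others. The sketch below uses word-set (Jaccard) overlap, which is an assumption chosen for simplicity and only a crude proxy for real agreement. The function names, the sample responses, and the 0.3 threshold are all hypothetical. Note that the outlier is flagged for a follow-up question, not dropped.

```python
from itertools import combinations
from typing import Dict, List, Set


def tokens(text: str) -> Set[str]:
    """Lowercased word set with surrounding punctuation stripped."""
    return {w.strip(".,;:!?\"'()").lower() for w in text.split()} - {""}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Shared words divided by all words; 1.0 means identical word sets."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def mean_similarity(responses: Dict[str, str]) -> Dict[str, float]:
    """Average similarity of each response to every other response."""
    toks = {name: tokens(text) for name, text in responses.items()}
    sums = {name: 0.0 for name in responses}
    for a, b in combinations(responses, 2):
        s = jaccard(toks[a], toks[b])
        sums[a] += s
        sums[b] += s
    others = max(len(responses) - 1, 1)
    return {name: total / others for name, total in sums.items()}


def flag_outliers(responses: Dict[str, str], threshold: float = 0.3) -> List[str]:
    """Names whose answers diverge from the rest; they are kept and questioned."""
    return [n for n, s in mean_similarity(responses).items() if s < threshold]


# Made-up sample responses, for illustration only.
SAMPLE = {
    "model_a": "BPM converged near 138.",
    "model_b": "The BPM converged near 138.",
    "model_c": "BPM has converged near 138.",
    "model_d": "New disruptors will arrive soon.",
}

if __name__ == "__main__":
    for name in flag_outliers(SAMPLE):
        print(f"Ask {name} why it differs: {SAMPLE[name]}")
```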

The critical point is "do not reject outliers." This connects directly to TRIVIUM's veto design — the U(p) → 0 veto structure — implemented later.
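The text does not define U(p), so the following is only one possible reading of a "U(p) → 0" veto, not TRIVIUM's implementation. It assumes each agent scores a proposal in [0, 1] and compares a plain average with a product. Under the average, a single dissenting agent is diluted; under the product, one score near zero pulls the total toward zero. The names `mean_score`, `veto_score`, and the agent labels are hypothetical.

```python
import math
from typing import Dict


def mean_score(scores: Dict[str, float]) -> float:
    """Plain average: a lone dissenter is diluted by the majority."""
    return sum(scores.values()) / len(scores)


def veto_score(scores: Dict[str, float]) -> float:
    """Product of scores in [0, 1]: any single score near 0 acts as a veto."""
    for name, s in scores.items():
        if not 0.0 <= s <= 1.0:
            raise ValueError(f"score for {name} must be in [0, 1], got {s}")
    return math.prod(scores.values())


# Made-up scores: two agents approve, one rejects outright.
SCORES = {"agent_a": 0.9, "agent_b": 0.9, "agent_c": 0.0}

if __name__ == "__main__":
    print("mean:", mean_score(SCORES))
    print("veto:", veto_score(SCORES))
```

With these made-up scores the average still looks acceptable while the product is exactly zero, which is the sense in which the outlier is not outvoted.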

This Is the Origin of TRIVIUM

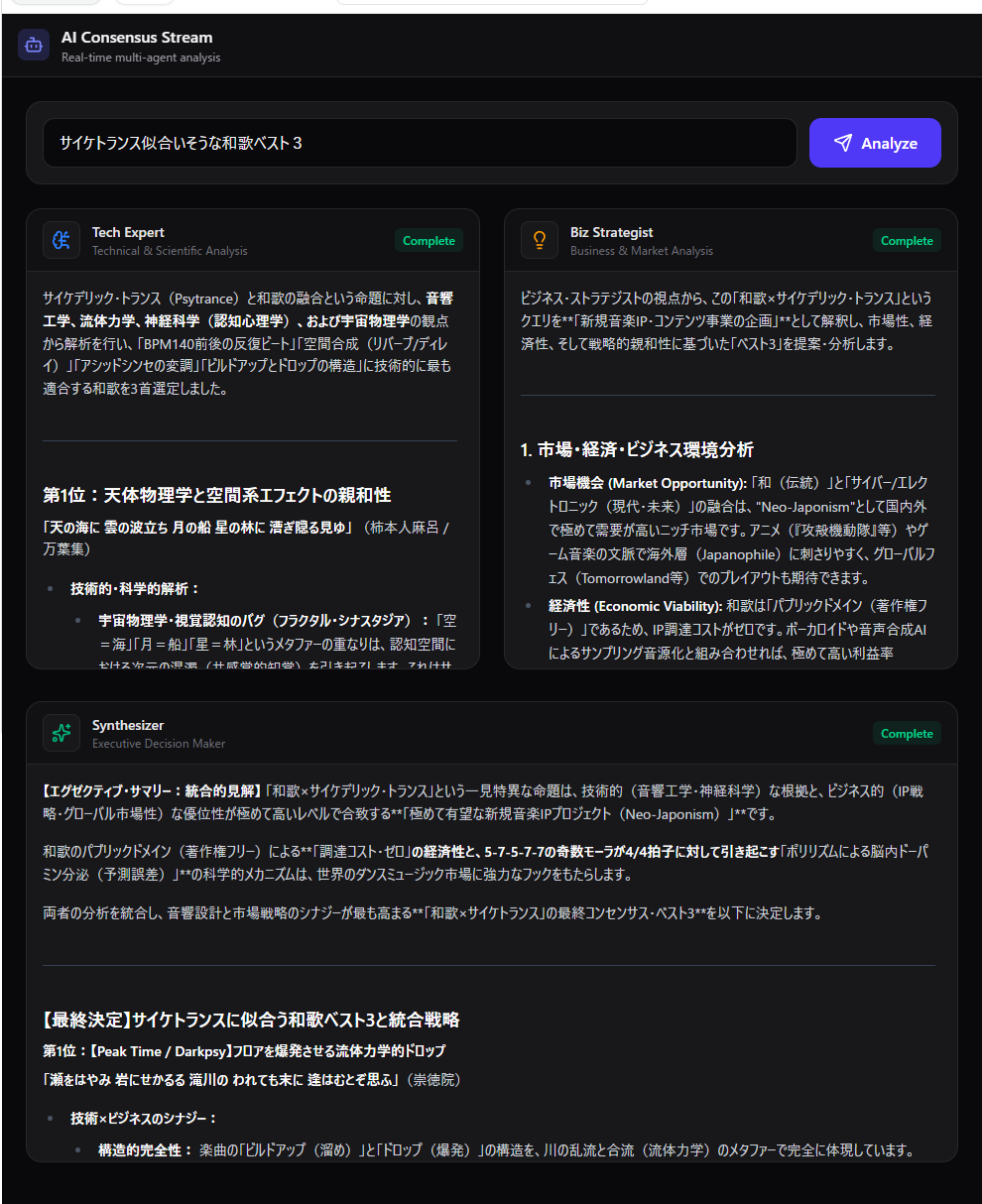

Query: "Best 3 waka poems suited to psychedelic trance." Three agents, Tech Expert (acoustics/neuroscience), Biz Strategist (market analysis), and Synthesizer (integrated decision), evaluate independently and output a convergence point. This is the direct structural origin of TRIVIUM's 3-agent architecture.

The five-LLM stream started as a crude system: copy a prompt by hand, paste it into five browser tabs, line up the results in a spreadsheet, scan visually for convergence points. It was entirely manual. But within that manual work a structure became visible, and by the time the stream evolved into this UI, that structure had been made explicit.

TRIVIUM is not "a design that uses three LLMs." It is "the process by which multiple independent evaluating agents converge — implemented as a system." That philosophy began in a spreadsheet with five tabs.